The Fastest Way to Kill an AI Project

Why AI initiatives fail in conference rooms!

If you want to kill an AI project…

Invite 26 people.

Put them in a conference room.

Ask, “So… what do we think about this AI use case?”

And then watch the project slowly turn into a group therapy session.

I met with a senior leader today, and she said something so simple and so true!

Most AI projects fail because too many people sit around chirping opinions.

Not because people are unhelpful.

Because the room fills up with opinions and runs out of owners.

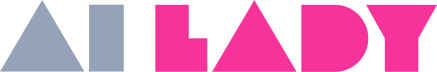

Ants. Yes, ants.

A professor at the Weizmann Institute of Science ran a classic “piano movers” puzzle: maneuvering a large, oddly shaped object through a tight, constrained space.

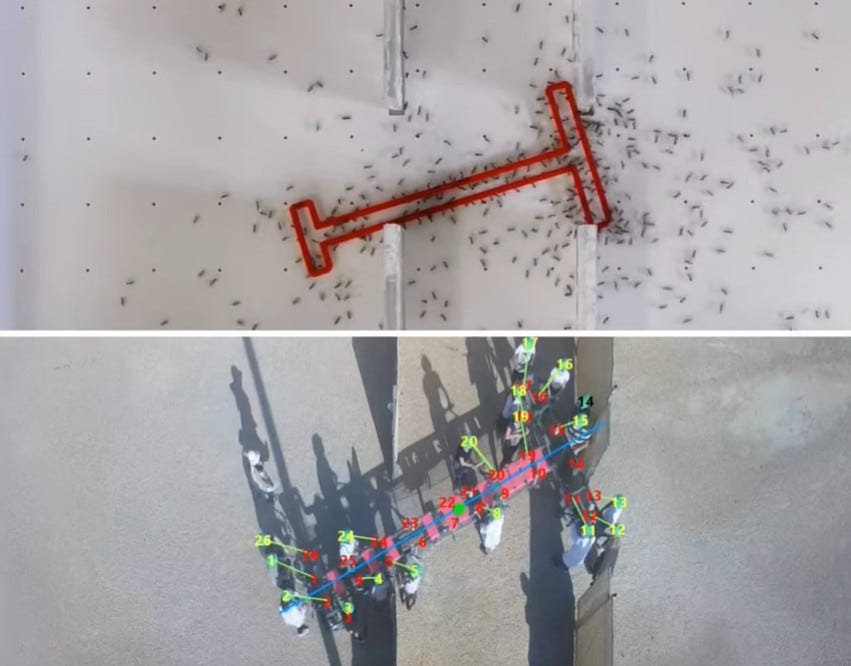

He tested ants versus humans.

Here’s what happened:

Ants, in groups, got dramatically better.

Humans, in groups, did not.

Ant teams scaled beautifully: groups of about 80 ants got dramatically more effective, while large human groups (about 16–26 people) didn’t improve in the same way and sometimes performed worse than the best individual in the group.

When communication was restricted, human performance deteriorated.

The ants didn’t debate hypothetical failures.

They moved.

They relied on movement feedback and on what the researchers describe as a kind of shared memory, sliding the object along walls in a near-deterministic way.

Humans did what humans do.

They debated. They clashed. They tried to align. They often optimized for consensus over efficiency. They discussed random and hypothetical risks.

Which is… painfully familiar.

Because corporate AI projects are basically “piano movers puzzles” in disguise:

tight constraints (data, privacy, workflows)

uncertainty, and hence high risk (AI isn’t deterministic)

high visibility

high fear of being blamed

And our default response is to add more people.

More stakeholders. More meetings. More “alignment.” More people to pass the buck.

But the experiment suggests something uncomfortable:

More brains in the room don’t automatically create collective intelligence.

Often, they just create collective drag.

The real enemy of AI projects isn’t the technology

AI work is messy. It needs iteration. It needs feedback loops. It needs decisions that can be reversed quickly.

Big rooms are bad at that because they produce:

unique strategies (everyone has a different mental model)

conflict disguised as “We need to align on XYZ and ABC”

risk inflation (“What if this goes wrong?” really means “How do we avoid getting blamed?”, and that becomes the project)

consensus gravity (the group drifts to the most obvious, safest, suboptimal path: nobody hates it, but nobody loves it)

When ownership is fuzzy, people focus on being involved - not on being correct.

That’s how you end up with 26 people “contributing” and no one delivering.

A quick story from the field

We recently went live with an AI tool, and it worked because we stayed disciplined about the humans.

We kept the working team lean.

Not “small for the sake of small.”